The first few seconds of this video are deliberately black screened. Do not adjust your television set.

Over the past several years, miniaturisation in electronics has made it possible to move computing away from the desktop or laptop computer and place processing power and sensors directly within interactive objects. This concept or phenomenon is sometimes called pervasive computing. It means that, as Human-Computer Interaction (HCI) designers, we have the opportunity to rethink the way we interact with artefacts that rely on digital processing to do their job. We can break free from the overtly machine-like nature of interfaces and create something more instinctive and ‘natural’.

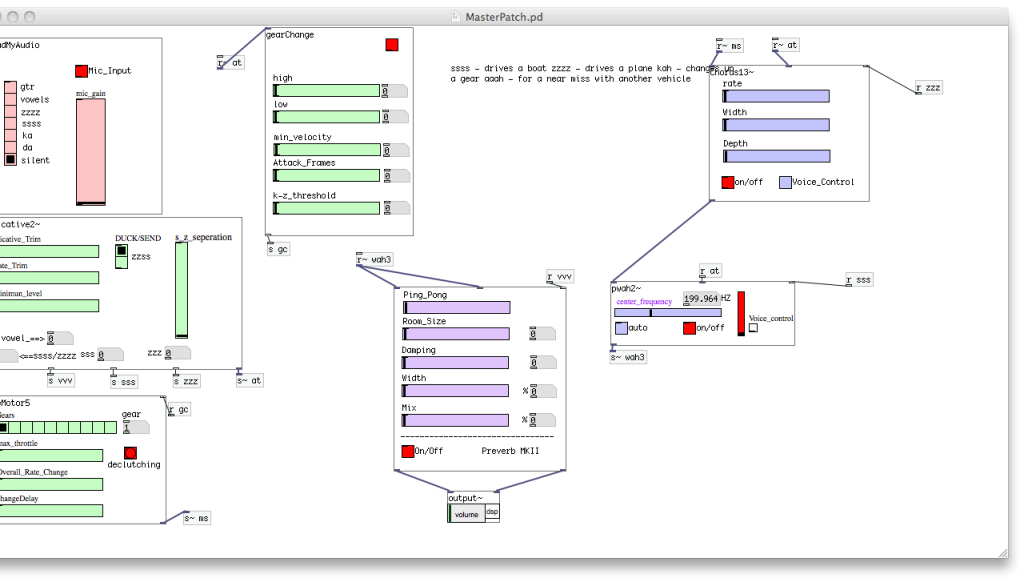

It’s my contention that digital musical instruments are no exception. In this, my master’s thesis (for which I proudly received a 12), I created a mash-up of a digitally augmented snare drum. Aside from changing between presets, all interaction is based on accepted techniques for acoustic drums, and all the sound is processed, synthesised, amplified and transmitted from within the drum itself.

Evaluation showed that, as with all musical instruments, personal tastes vary, but new performance capabilities were clearly identified without the need to learn new techniques or battle with parameter adjustments.